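The robots.txt file (the robots exclusion standard) is a plain-text file in the root of a web server. It tells web crawlers which parts of a site they are allowed to crawl. You can use it to keep folders and sections of your website out of search engines, which is useful for areas that should not appear in search results.

Note that robots.txt only controls crawling. A blocked URL can still be listed if other pages link to it, and the rules are requests rather than access control. Well-behaved crawlers obey them, but the file protects nothing. Because anyone can read it, it should not be used to hide private areas.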

When a well-behaved web crawler (also called a robot or spider) visits a website, it first requests the robots.txt file. If one is present, the crawler follows the rules it contains. The two most common directives are:
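A crawler does not search subfolders for robots.txt. It takes the scheme, host and port of the page it wants to fetch and requests /robots.txt at that root. As a result, https://shop.yoursite.com and http://www.yoursite.com each need their own file. The helper below is a minimal sketch of that lookup. The function name robots_txt_url is a hypothetical name used only for illustration.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Hypothetical helper: return the robots.txt URL that covers page_url.

    Only the scheme and host (including any port) are kept; the path,
    query string and fragment of the page are discarded.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.yoursite.com/blog/2020/post.html?ref=home"))
# https://www.yoursite.com/robots.txt
```

In Python's standard library, RobotFileParser.set_url() followed by read() fetches and parses the file at such a URL.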

User-agent: * (starts a group of rules that applies to every robot; put a crawler's name, such as googlebot, in place of * to target only that crawler)

Disallow: /folder (tells the robots in that group not to crawl any URL whose path begins with /folder, which includes /folder/page.html and also /folder-old/)
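The sketch below shows how a compliant crawler applies these two lines, using Python's standard urllib.robotparser module. The user-agent name AnyBot and every path other than /folder are hypothetical placeholders. /folder-old/ is blocked as well, because Disallow matches by path prefix.

```python
from urllib.robotparser import RobotFileParser

# The two directives described above, as a crawler would download them.
ROBOTS_TXT = """\
User-agent: *
Disallow: /folder
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Hypothetical paths on the placeholder site, checked for a hypothetical robot.
results = {
    path: parser.can_fetch("AnyBot", "https://www.yoursite.com" + path)
    for path in ("/folder", "/folder/page.html", "/folder-old/", "/about.html")
}
print(results)
# {'/folder': False, '/folder/page.html': False, '/folder-old/': False, '/about.html': True}
```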

Because each group starts with a User-agent line, you can give different robots different access. For example, you could allow Google to crawl everything while asking unknown spiders to stay out of your site entirely.
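The sketch below implements the Google-only policy just described and checks it with urllib.robotparser. The robots.txt content, the page URL and the UnknownSpider name are illustrative assumptions. An empty Disallow: value means nothing is disallowed for that group.

```python
from urllib.robotparser import RobotFileParser

# Assumed policy: Googlebot may crawl everything, all other robots are asked to stay out.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow:

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://www.yoursite.com/products/index.html"  # placeholder page
for agent in ("Googlebot", "Googlebot/2.1", "UnknownSpider/0.1"):
    # Robots with no group of their own fall back to the "*" group.
    print(agent, parser.can_fetch(agent, url))
```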

You can also add the location of your sitemap to the robots.txt file to point robots in the right direction. The major search engines read this Sitemap line and use the sitemap to discover the pages on your site.
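The Sitemap line does not belong to any User-agent group, so a parser can collect it from anywhere in the file. Python's urllib.robotparser exposes it through site_maps(), which is available from Python 3.8. The short sketch below assumes an example file that reuses the placeholder sitemap URL from this article.

```python
from urllib.robotparser import RobotFileParser

# Assumed file content; the Sitemap line sits outside any User-agent group.
ROBOTS_TXT = """\
Sitemap: http://www.yoursite.com/sitemap.xml

User-agent: *
Disallow: /administrator/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())
print(parser.site_maps())
# ['http://www.yoursite.com/sitemap.xml']
```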

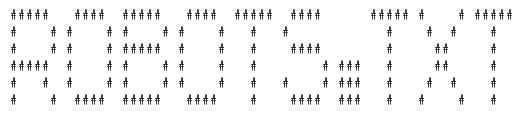

As an example of a robots.txt file that uses some more advanced concepts, consider the following:

    Sitemap: http://www.yoursite.com/sitemap.xml

    User-agent: googlebot
    Disallow: /administrator/
    Disallow: /cache/
    Disallow: /components/
    Disallow: /images/
    Allow: /images/stories/

    User-agent: *
    Disallow: /administrator/
    Disallow: /cache/
    Disallow: /components/
    Disallow: /images/

Here the sitemap is listed for all crawlers. A User-agent group with an Allow rule then gives one search engine permission to crawl a subfolder inside a disallowed directory: googlebot may crawl /images/stories/, while other crawlers may not. The Disallow lines are repeated in the googlebot group because a crawler follows only the most specific group that matches its name and ignores the * group.

Not all spiders understand the Allow directive, so be careful when relying on it. If your site is added to Google Search Console (formerly Google Webmaster Tools), you can see potential errors and check your syntax there.
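Parsers also disagree about which rule wins when both an Allow and a Disallow line match. RFC 9309 and Google use the most specific (longest) matching path. CPython's urllib.robotparser, as currently implemented, applies the first matching rule in file order.

The sketch below compares the two approaches on the googlebot group above. The function allowed_longest_match is a hypothetical, simplified evaluator that ignores the * and $ wildcards. The sample paths are assumptions.

```python
from urllib.robotparser import RobotFileParser

# The googlebot group from the example above, in file order.
GOOGLEBOT_RULES = [
    ("Disallow", "/administrator/"),
    ("Disallow", "/cache/"),
    ("Disallow", "/components/"),
    ("Disallow", "/images/"),
    ("Allow", "/images/stories/"),
]


def allowed_longest_match(rules, path):
    """Simplified RFC 9309 precedence: the rule with the longest matching
    path wins and Allow wins a tie. Wildcards (* and $) are not handled."""
    best_len, allowed = -1, True  # no matching rule means the path is allowed
    for kind, rule_path in rules:
        if rule_path and path.startswith(rule_path):
            is_allow = kind == "Allow"
            if len(rule_path) > best_len or (len(rule_path) == best_len and is_allow):
                best_len, allowed = len(rule_path), is_allow
    return allowed


# Build the same group as robots.txt text and hand it to the standard library.
robots_txt = "User-agent: googlebot\n" + "".join(
    f"{kind}: {rule_path}\n" for kind, rule_path in GOOGLEBOT_RULES
)
stdlib = RobotFileParser()
stdlib.parse(robots_txt.splitlines())

for path in ("/images/logo.png", "/images/stories/photo.jpg", "/cache/page.html"):
    print(
        path,
        "longest match:", allowed_longest_match(GOOGLEBOT_RULES, path),
        "urllib.robotparser:", stdlib.can_fetch("googlebot", "http://www.yoursite.com" + path),
    )
```

To make both kinds of parser agree, put the Allow: /images/stories/ line before Disallow: /images/. A first-match parser then reaches the same answer as a longest-match one for these paths.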

As mentioned, the robots.txt file must be placed in the root of your public web server so that it is reachable at http://www.yoursite.com/robots.txt. On many Linux shared hosts that is the /public_html/ directory. If you cannot upload a robots.txt file to your server, you will have to use the robots meta tag instead, added to each page you want to keep out of search results. It is the next best solution, but keep in mind that many robots will ignore the meta tag.
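For comparison, the sketch below shows roughly how a crawler might read the robots meta tag once it has fetched a page. It is built on Python's html.parser. The class name RobotsMetaParser and the sample page are hypothetical. Crawler-specific tags such as name="googlebot" and the X-Robots-Tag HTTP header are ignored here.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Hypothetical helper: collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # html.parser already lowercases tag and attribute names.
        if tag != "meta":
            return
        attrs = dict(attrs)
        if (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, nofollow".
            self.directives.update(
                d.strip().lower() for d in content.split(",") if d.strip()
            )


PAGE = """<html><head>
<title>Private area</title>
<meta name="robots" content="noindex, nofollow">
</head><body>...</body></html>"""

meta = RobotsMetaParser()
meta.feed(PAGE)
print(sorted(meta.directives))
# ['nofollow', 'noindex']
```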